Physicalization of AR/XR Experiences

Building tools to understand how AR behaves in real environments

Designing AR experiences required understanding how the system behaves in context

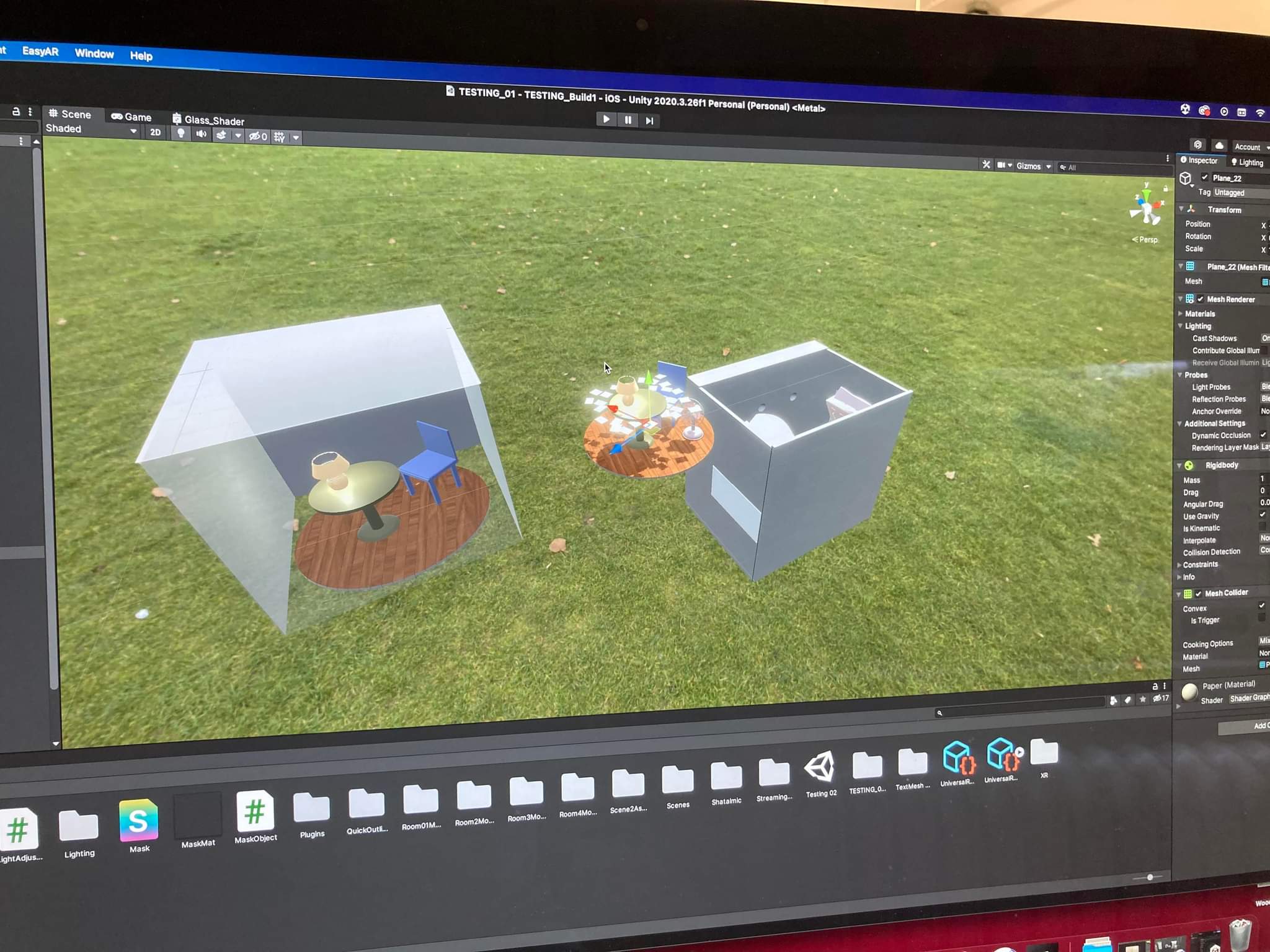

Early in the project, I tried to design a complete AR experience. The prototype exposed issues with tracking stability, lighting conditions, and alignment between physical and digital elements.

Most of the learning came from building and testing rather than designing the final experience. That shifted the direction of the project.

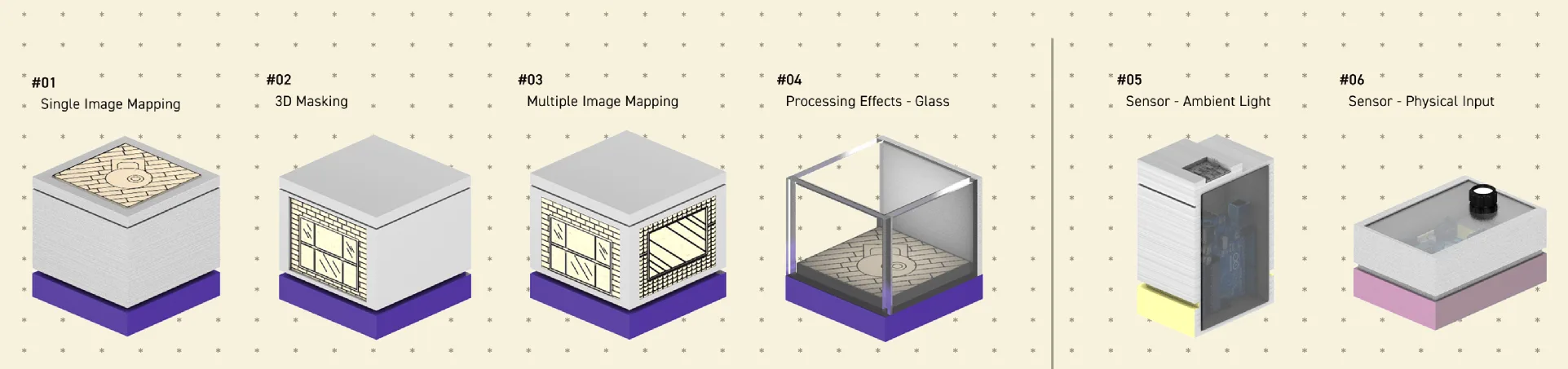

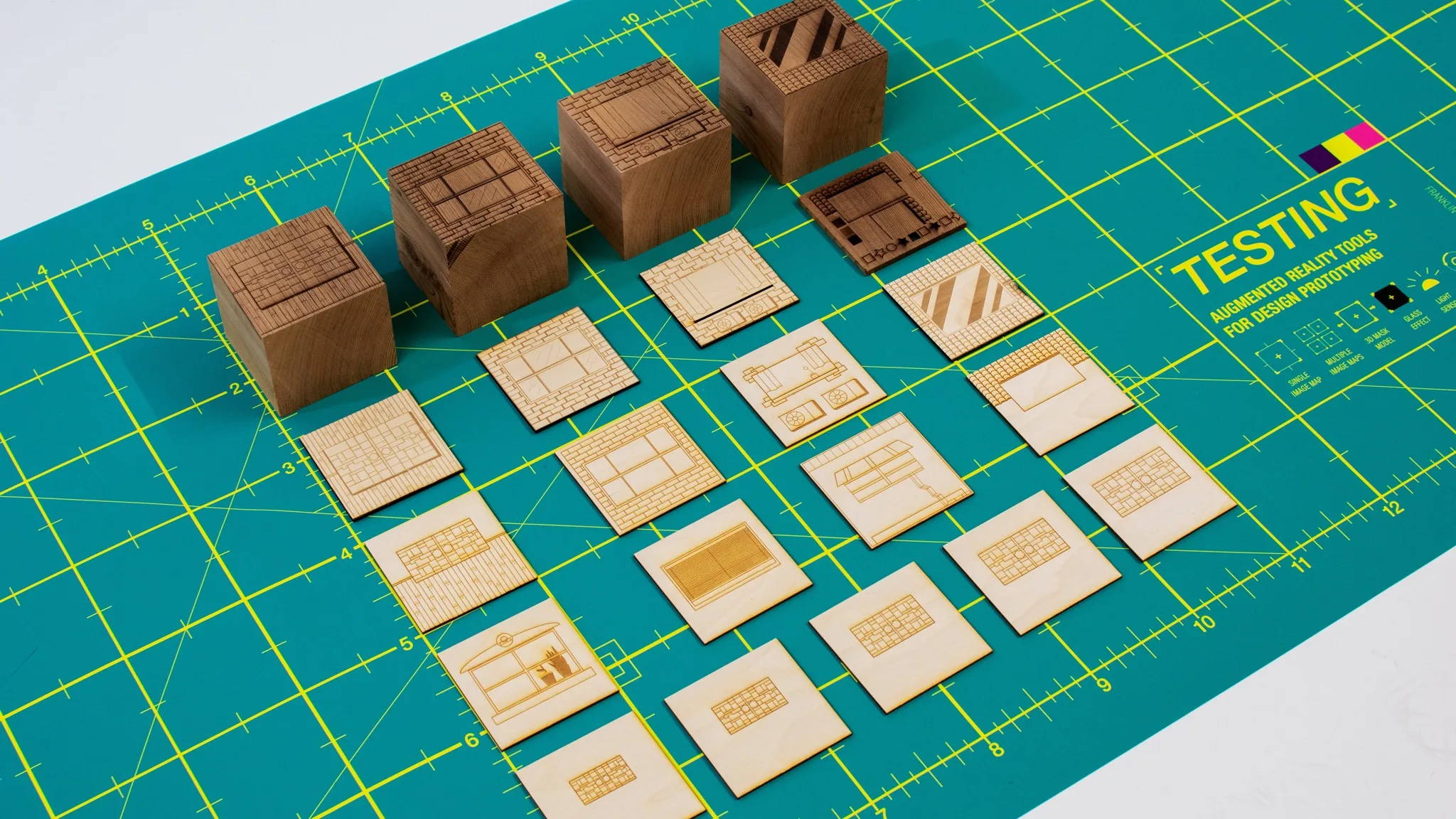

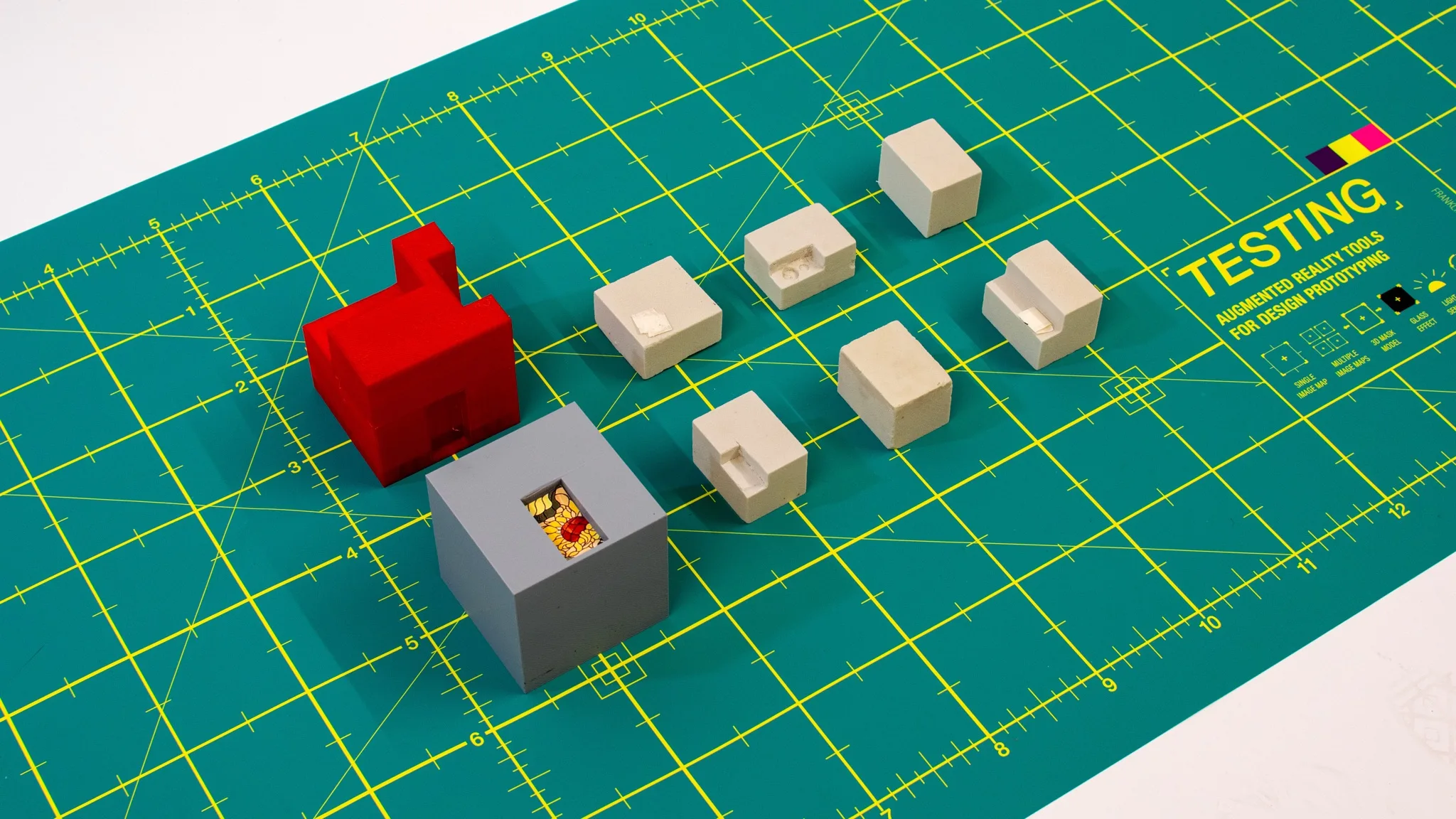

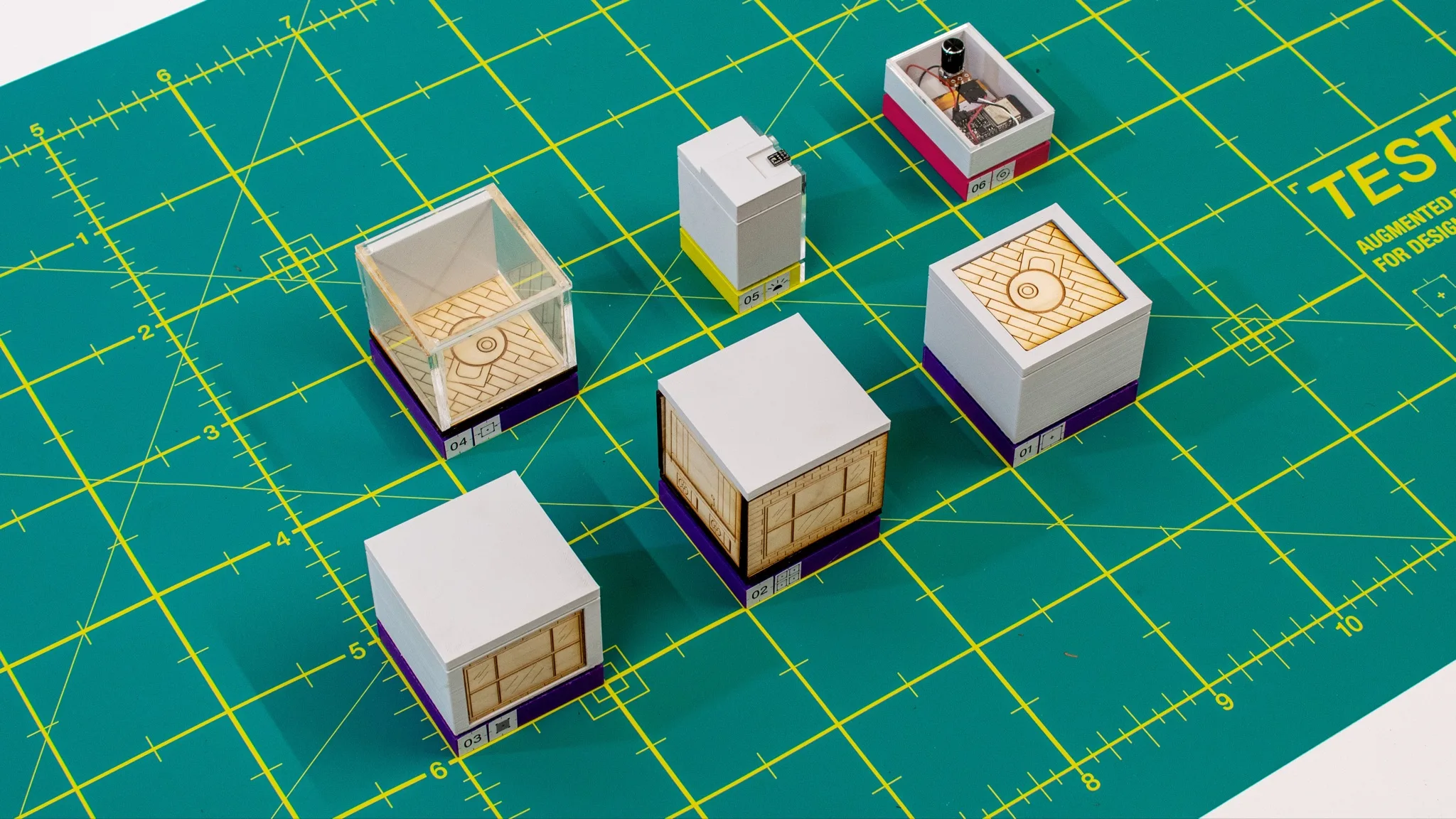

I built six physical tools, each focused on a specific AR mechanic, to make these behaviors easier to test and reason about during design.

A set of six tools for testing how AR behaves

The final system includes six physical tools, each designed to isolate and test one AR mechanic. Together they cover tracking, occlusion, visual effects, and physical input.

Each tool combines physical fabrication, image targets, and real-time AR interaction.

Six tools, six AR behaviors

Each prototype isolates one behavior so it can be tested directly.

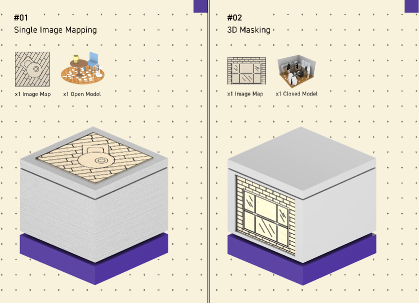

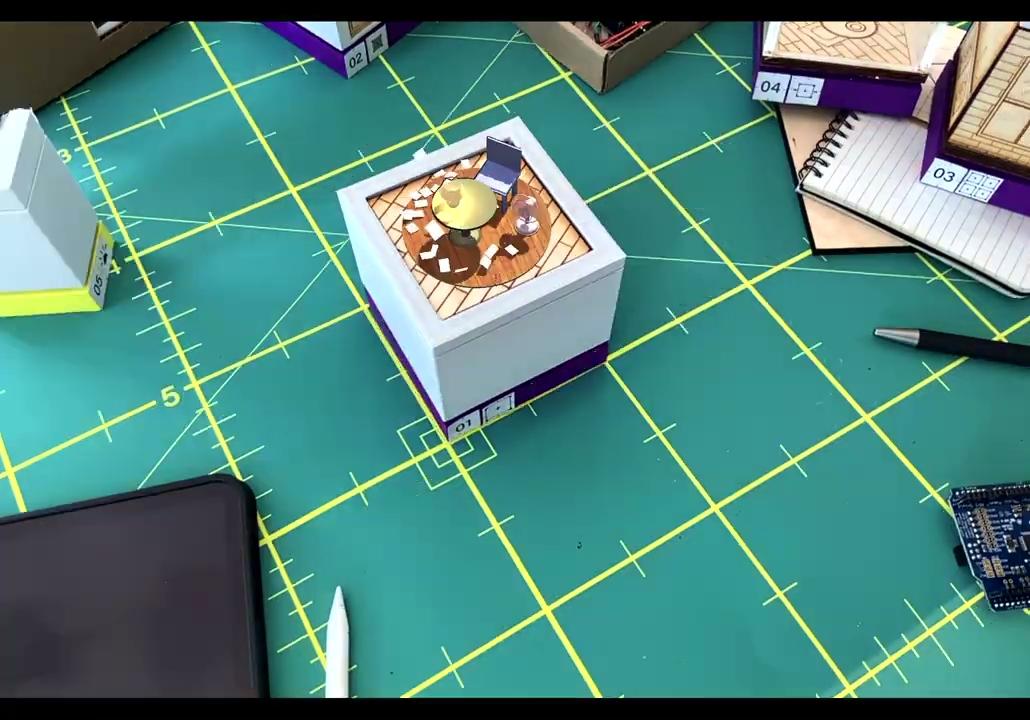

Single Image Mapping

Tests how surface finish, contrast, and printed detail affect tracking. Matte printed targets held tracking more consistently, while reflective or low-contrast surfaces lost tracking more often.
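Because low contrast was one of the failure modes, a quick numeric check can flag weak prints before a tracking session. The sketch below is a rough, hypothetical pre-screen, not how any AR SDK scores targets (SDKs typically rate image targets with their own feature-based analysis). It computes RMS contrast, the standard deviation of grayscale intensities scaled to 0..1. The function names and the `MIN_RMS_CONTRAST` threshold are illustrative assumptions.

```python
from statistics import pstdev

# Hypothetical screening threshold, chosen for illustration only;
# it is not a value taken from any AR SDK or from this project.
MIN_RMS_CONTRAST = 0.15


def rms_contrast(pixels):
    """RMS contrast of 8-bit grayscale values (0..255).

    Scales each value to 0..1 and returns the population standard deviation.
    """
    if not pixels:
        raise ValueError("need at least one pixel")
    normalized = [p / 255.0 for p in pixels]
    return pstdev(normalized)


def likely_trackable(pixels, threshold=MIN_RMS_CONTRAST):
    """Flag a printed target as worth testing only if its contrast clears the threshold."""
    return rms_contrast(pixels) >= threshold
```

A washed-out print whose pixels all sit in a narrow band (for example 120 to 135) scores far below the threshold, while a crisp black-and-white pattern scores near the 0.5 maximum. A check like this only catches one failure mode; reflective finishes still have to be tested with the camera.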

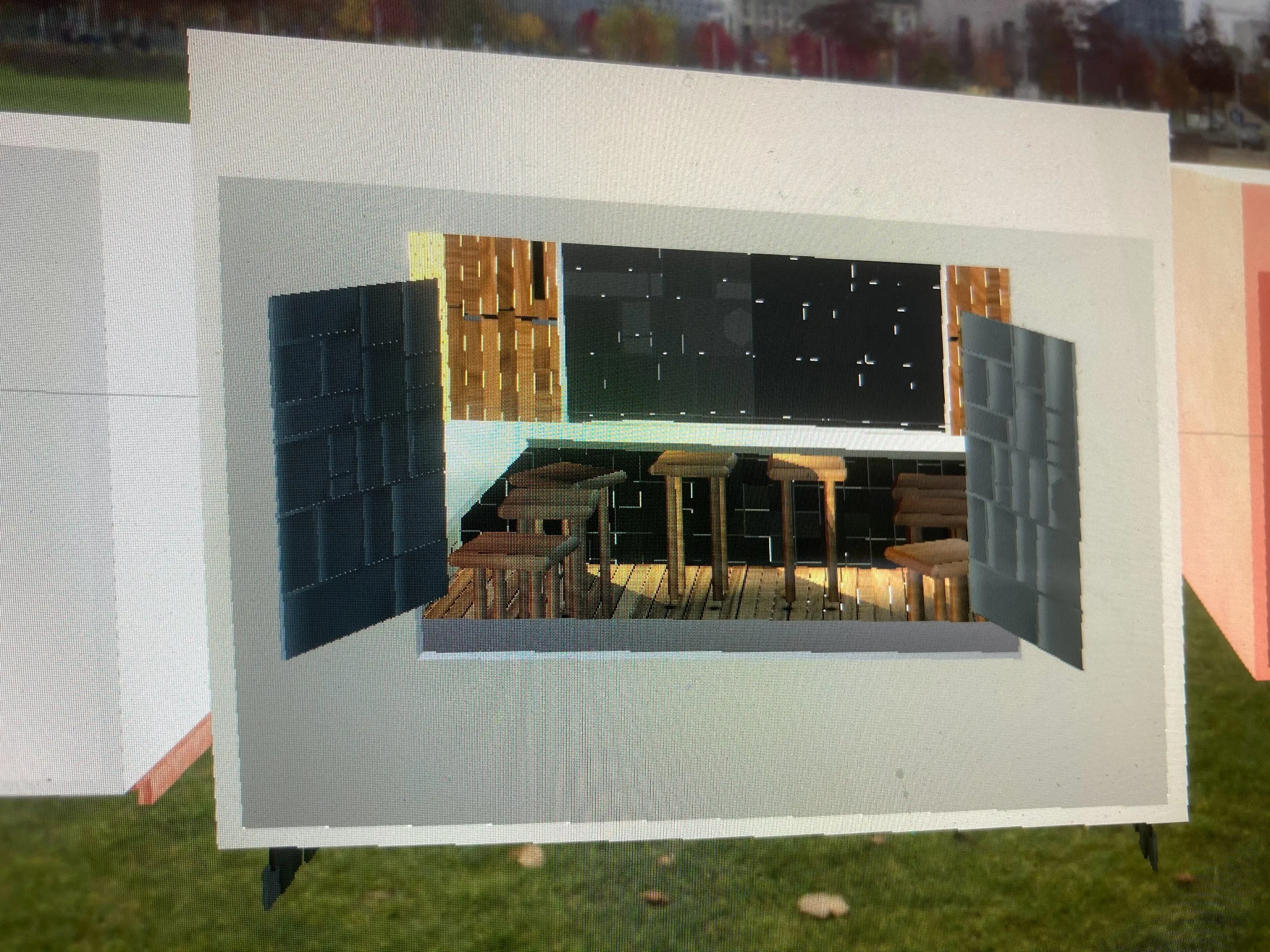

3D Masking

Tests how physical geometry can hide and reveal virtual content. Even small mismatches between the physical object and its digital model broke the illusion quickly.

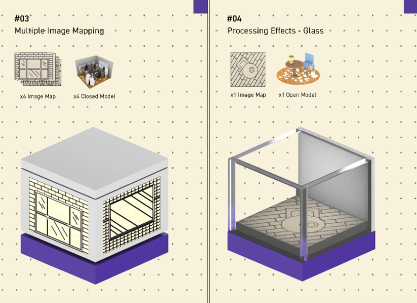

Multiple Image Mapping

Tests how several image targets behave together. Spacing, target size, and angle changed how coherent the virtual layer felt.

Processing Effects: Glass

Applies a shader effect to the live camera feed. The test showed how visual processing changes perception without changing physical geometry.

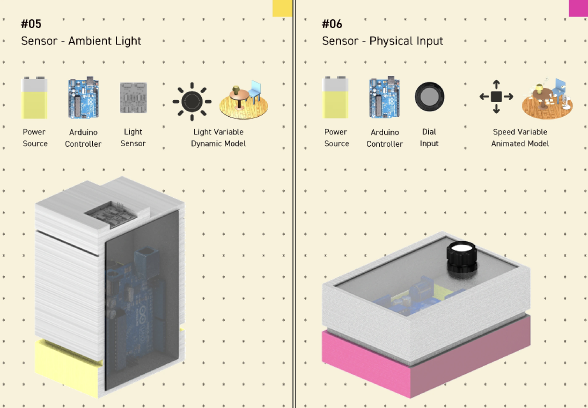

Reactive Lighting: Light Sensor

Uses ambient light as input, connecting a physical sensor to a virtual lamp. Environmental conditions became part of the interaction model.
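The mapping from a light reading to a virtual lamp can be sketched as a small controller. This is a hypothetical illustration, not the project's implementation: it assumes a 10-bit sensor reading (0 = dark), assumes the lamp switches on and brightens as the room darkens, and uses two thresholds (hysteresis) so the lamp does not flicker when the room sits near a single cut-off. `LampController` and its parameter values are illustrative names.

```python
class LampController:
    """Drives a virtual lamp from an ambient light reading.

    Assumptions (hypothetical, not from the original project):
    - the sensor returns a value in 0..adc_max, where 0 = dark;
    - the lamp turns on when the room gets dark and brightens as it darkens.
    """

    def __init__(self, on_below=0.35, off_above=0.45, adc_max=1023):
        if not 0.0 <= on_below < off_above <= 1.0:
            raise ValueError("need 0 <= on_below < off_above <= 1")
        self.on_below = on_below
        self.off_above = off_above
        self.adc_max = adc_max
        self.is_on = False

    def update(self, raw):
        """Return (is_on, intensity in 0..1) for one sensor reading."""
        level = min(max(raw / self.adc_max, 0.0), 1.0)  # clamp noisy readings
        # Two thresholds (hysteresis): the lamp turns on below on_below and
        # only turns off again above off_above, so it does not flicker.
        if self.is_on and level > self.off_above:
            self.is_on = False
        elif not self.is_on and level < self.on_below:
            self.is_on = True
        intensity = 1.0 - level if self.is_on else 0.0
        return self.is_on, intensity
```

Readings between the two thresholds leave the lamp in whatever state it was already in, which is what keeps the behavior stable when lighting drifts slightly between sessions.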

Physical Control: Potentiometer

Maps an analog dial to the speed of a virtual fan. Turning the dial made the link between physical input and digital response direct and easy to understand.
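A dial-to-fan mapping usually needs two small pieces: a range conversion and some smoothing, since raw analog readings jitter. The sketch below is hypothetical and assumes a 10-bit reading (0..1023, as on common microcontroller ADCs), a maximum fan speed of 720 degrees per second, and a small dead zone so the fan can stop fully. `dial_to_fan_speed` and `Smoother` are illustrative names, not the project's code.

```python
def dial_to_fan_speed(raw, adc_max=1023, max_speed_dps=720.0, deadzone=0.02):
    """Map a raw potentiometer reading to a fan speed in degrees per second.

    All parameter defaults are illustrative assumptions.
    """
    if not 0 <= raw <= adc_max:
        raise ValueError(f"reading {raw} outside 0..{adc_max}")
    t = raw / adc_max
    if t < deadzone:
        return 0.0  # dead zone: the fan stops fully at the low end of the dial
    t = (t - deadzone) / (1.0 - deadzone)  # rescale the remaining travel to 0..1
    return t * max_speed_dps


class Smoother:
    """Exponential moving average that damps jitter in analog readings."""

    def __init__(self, alpha=0.2):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self.value = None

    def update(self, x):
        """Blend a new reading into the running average and return it."""
        if self.value is None:
            self.value = float(x)
        else:
            self.value += self.alpha * (x - self.value)
        return self.value
```

Each frame, the smoothed reading would feed `dial_to_fan_speed`, and the fan's rotation would advance by `speed * dt` for that frame's time step. A lower `alpha` gives a calmer fan at the cost of a slightly slower response to the dial.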

AR behavior changes depending on environment, material, and setup

Image tracking depends on surface quality and contrast. Occlusion depends on physical form. Lighting conditions affect reliability. Small changes in setup can produce very different results.

These factors were difficult to evaluate early because they only became visible during implementation.

In practice, tracking dropped when surfaces were too reflective. Occlusion broke when geometry was misaligned. Lighting changes affected consistency across sessions.

The challenge was creating a way to test these behaviors earlier and more consistently.

The system was shaped by a few key constraints

Surface quality and contrast determine stability.

Geometry must align precisely with digital models.

Lighting conditions affect consistency.

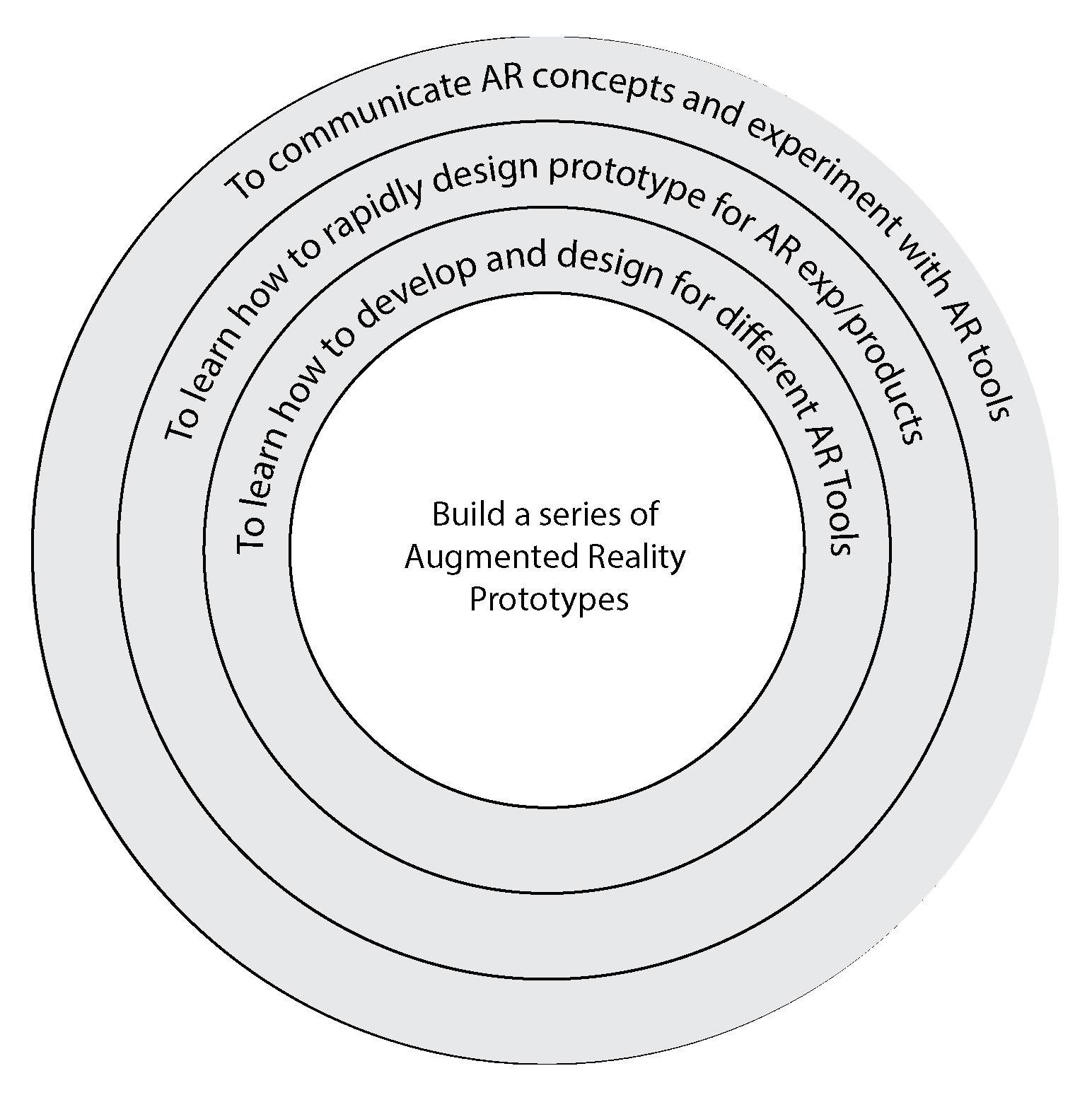

The project shifted from a single experience to a reusable testing system

The initial concept focused on a narrative AR experience built around physical objects. The first prototype showed that the reliability of the underlying mechanics was the limiting factor.

I shifted toward tools that isolate and test those mechanics individually.

Design a full AR experience.

Core issues came from system behavior.

Build tools to test individual mechanics.

Early prototypes exposed issues with tracking reliability and alignment, which led to the shift toward tool-based exploration.

Breaking AR into testable behaviors

Instead of treating AR as a single system, I separated it into distinct behaviors. Each tool isolates one mechanic, making it easier to test and compare under different conditions.

Tracking across surface types.

Occlusion using geometry.

Multi-marker stability.

Camera-based effects.

Environmental input.

Analog control mapping.

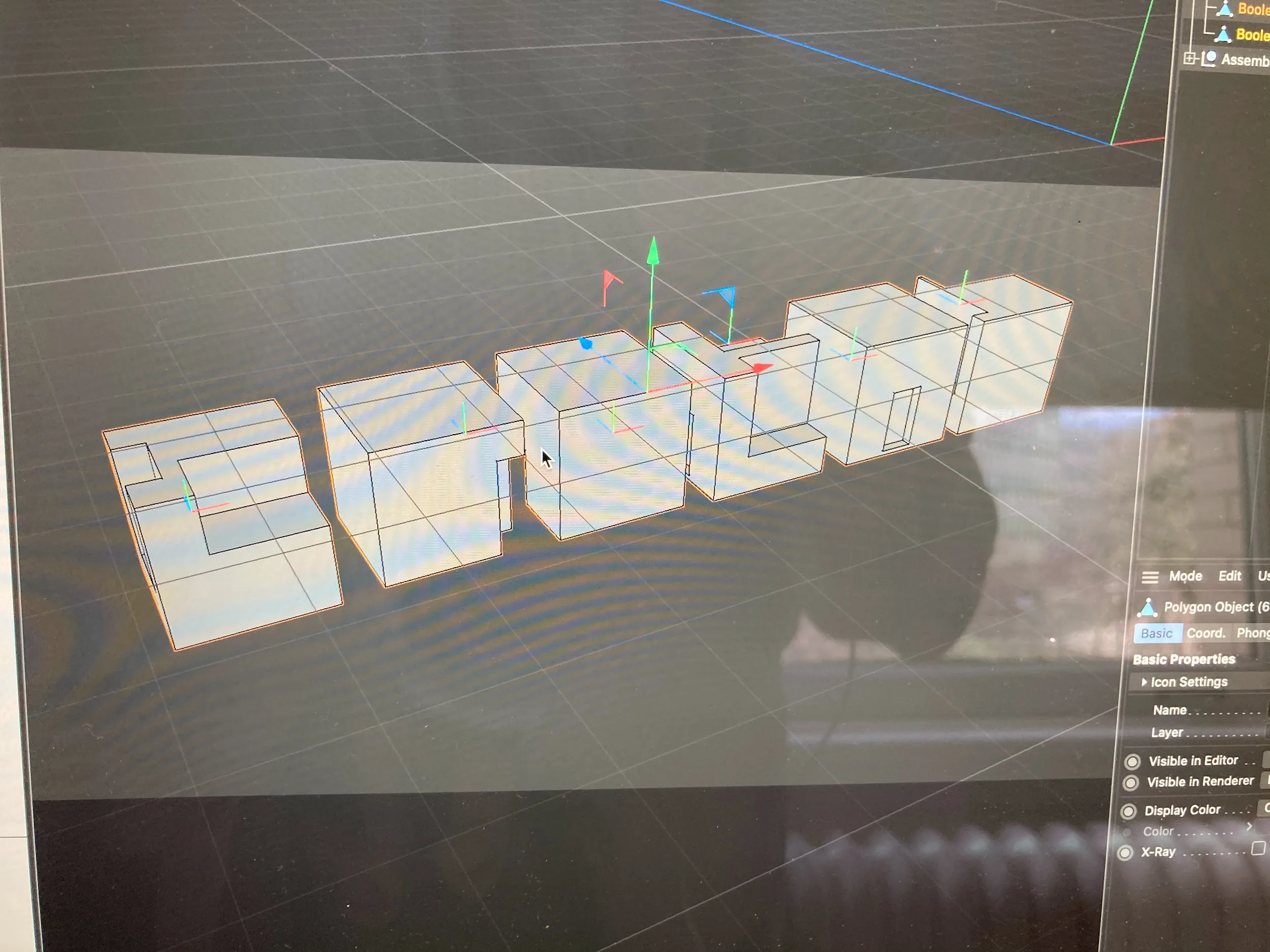

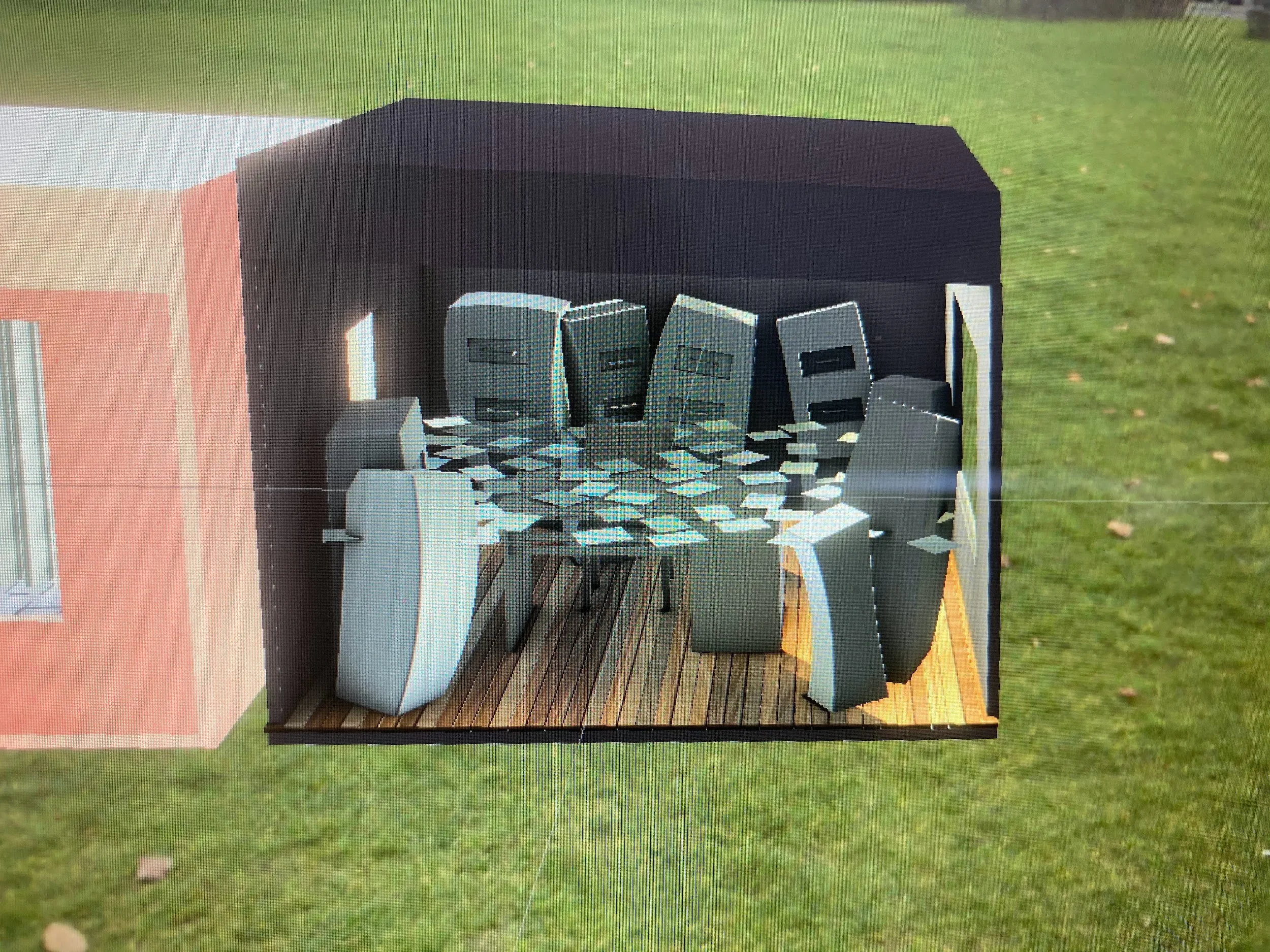

Each tool had a physical and digital architecture

These diagrams document how each tool was constructed and how physical components mapped to AR behaviors.

The tools made AR behavior easier to reason about during design

Instead of discovering issues late in development, I could test tracking, occlusion, and interaction earlier in the process.

This made it easier to design experiences that behave consistently across different environments, and it reduced rework during implementation.

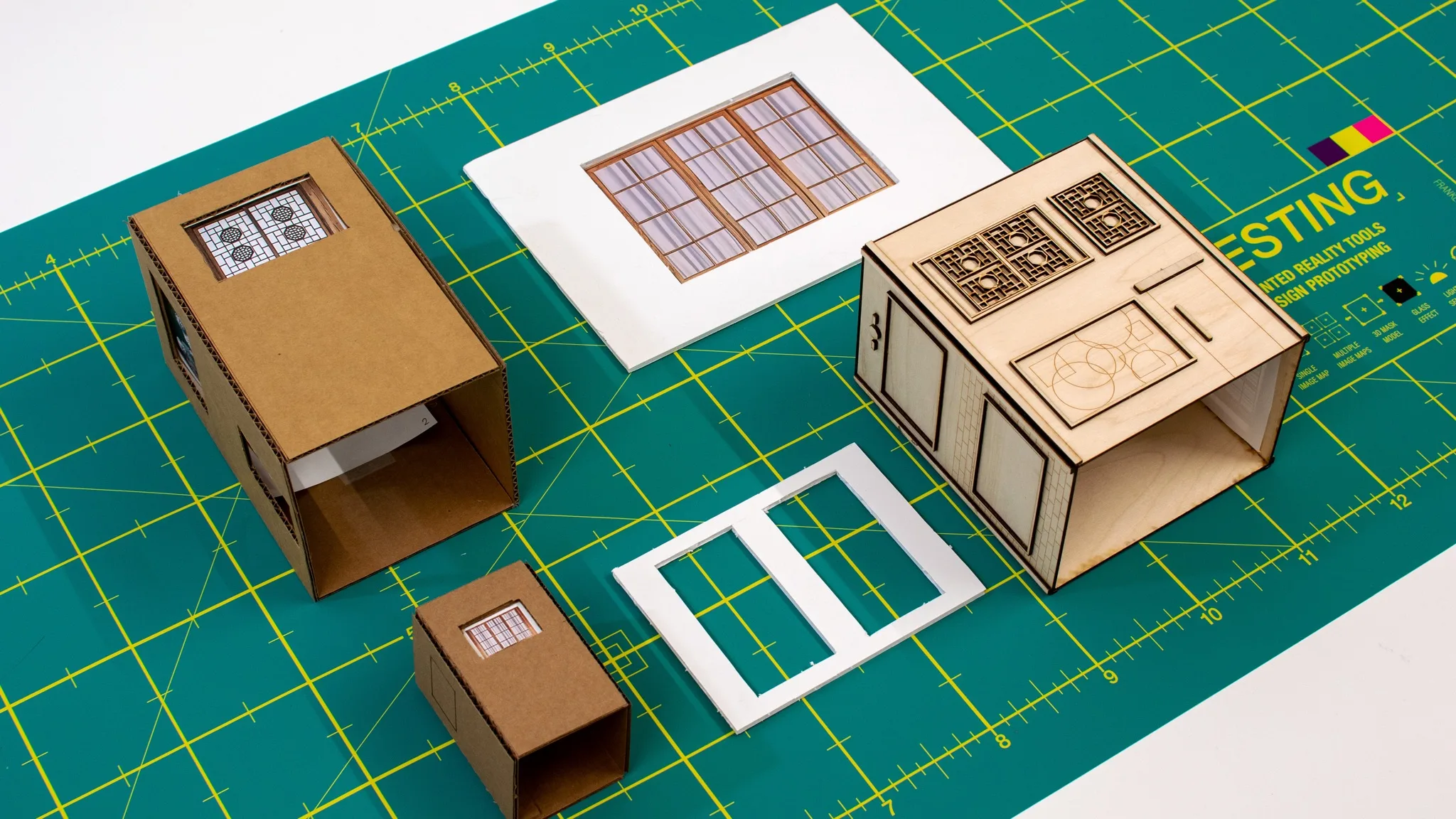

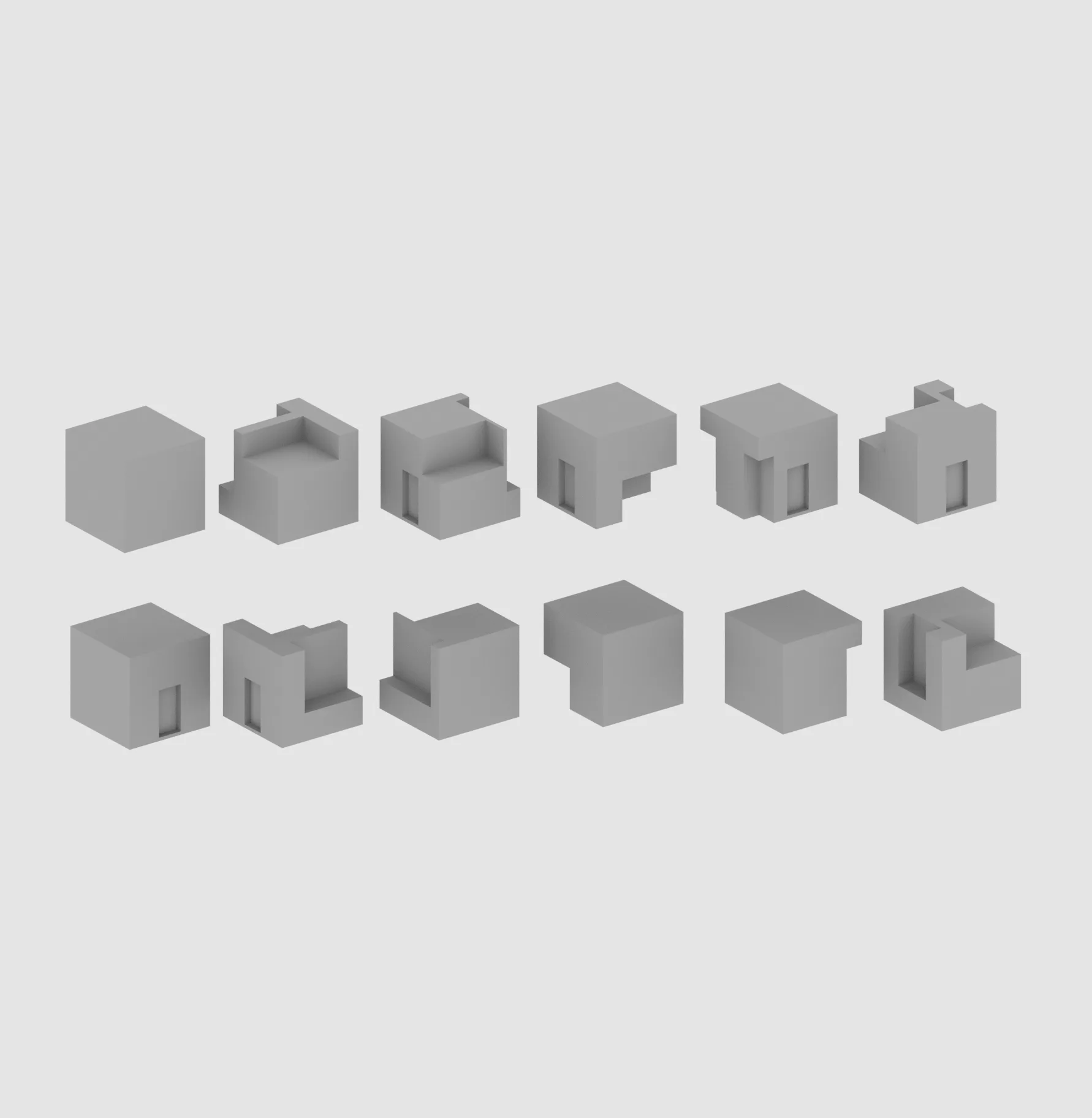

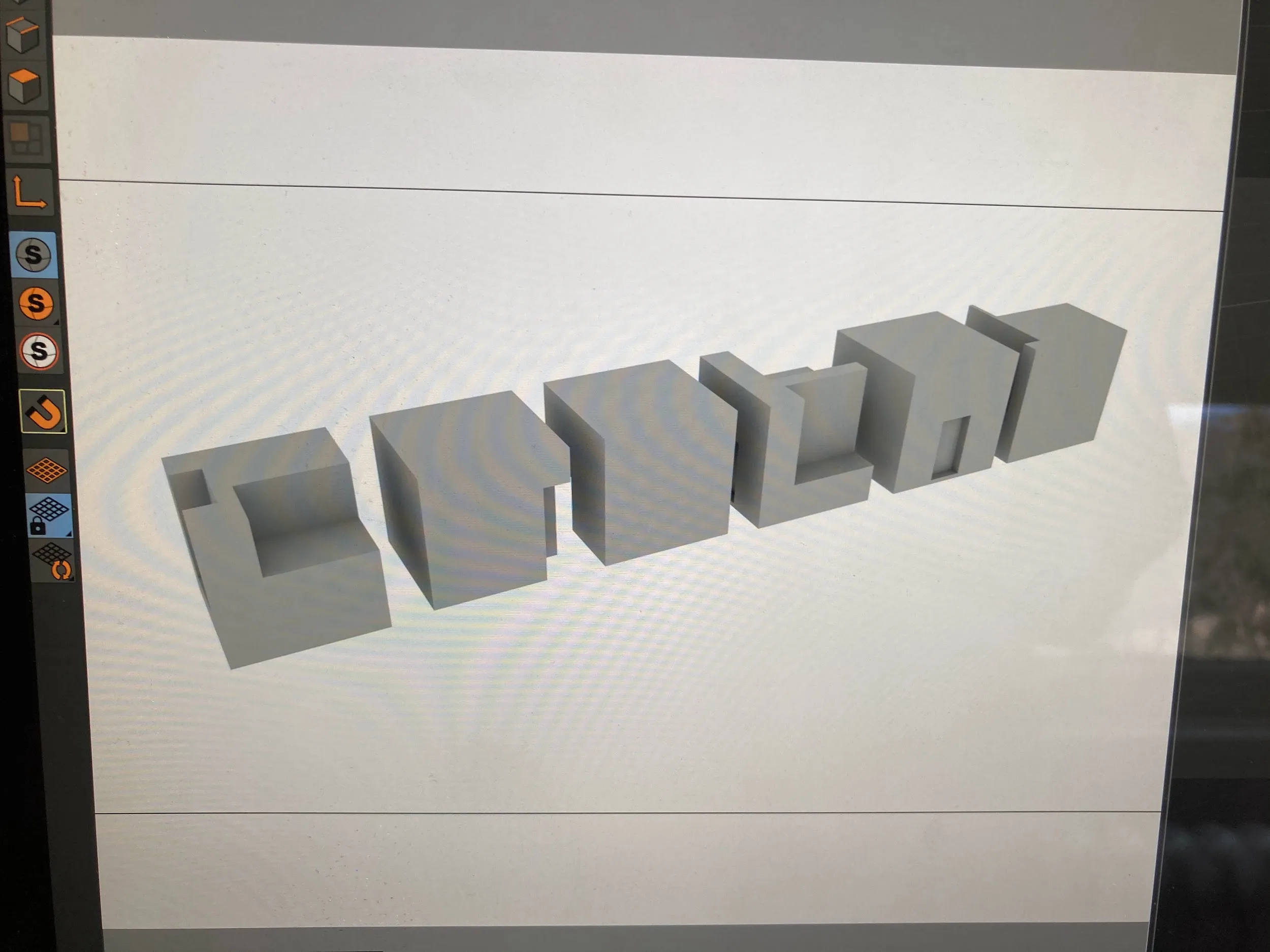

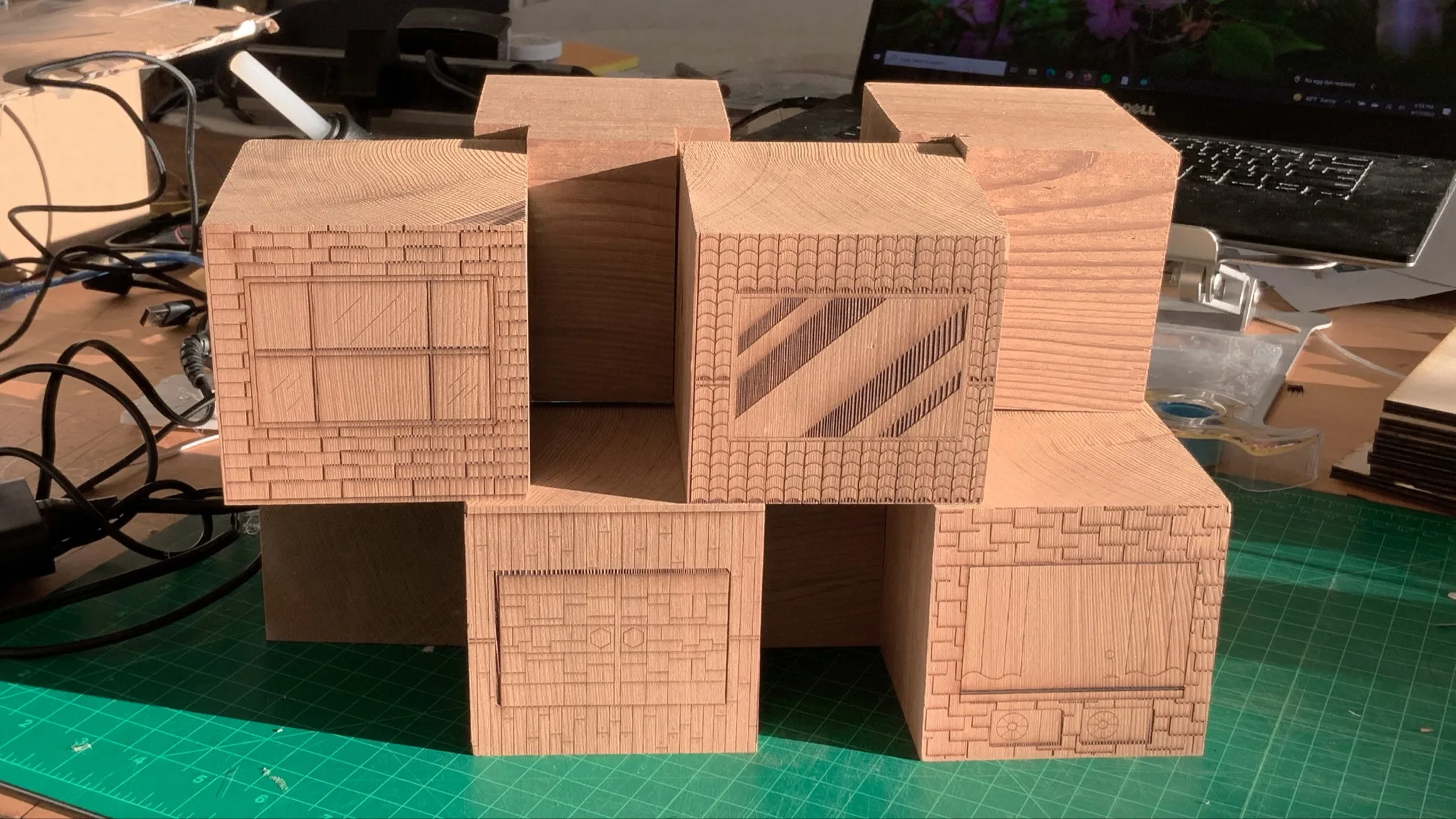

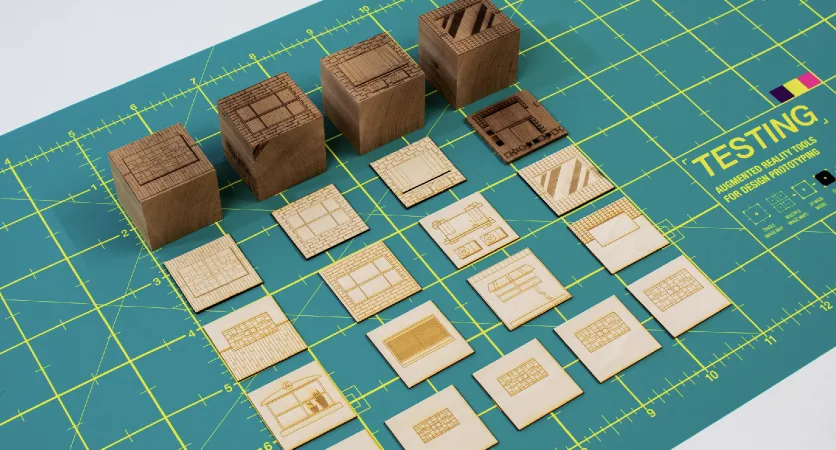

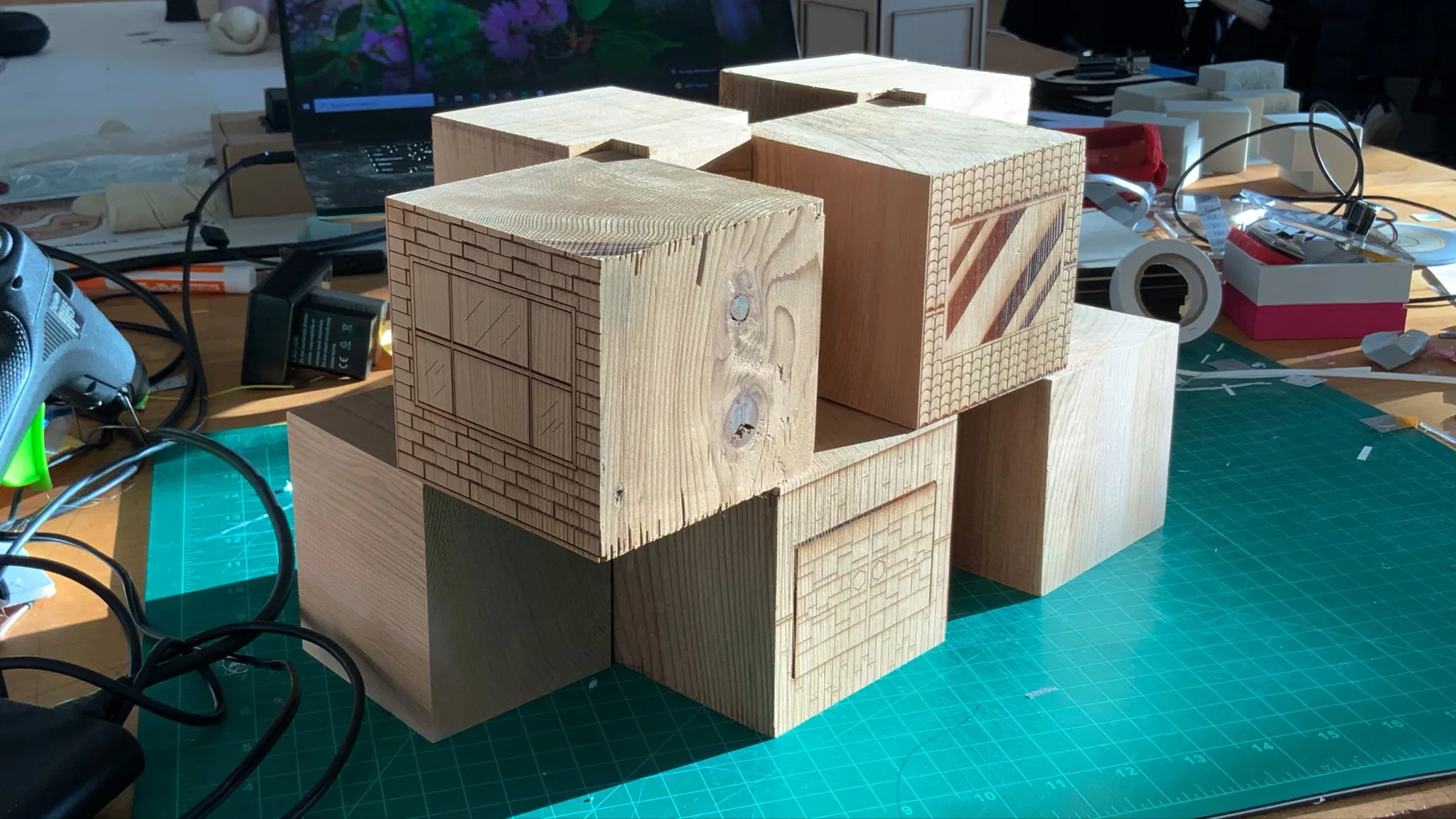

Iteration focused on form, material, and system integration

Most tools went through multiple iterations. Early versions either failed to track reliably or were too sensitive to setup conditions.

Adjusting surface treatments, marker density, and physical dimensions became part of the design process.

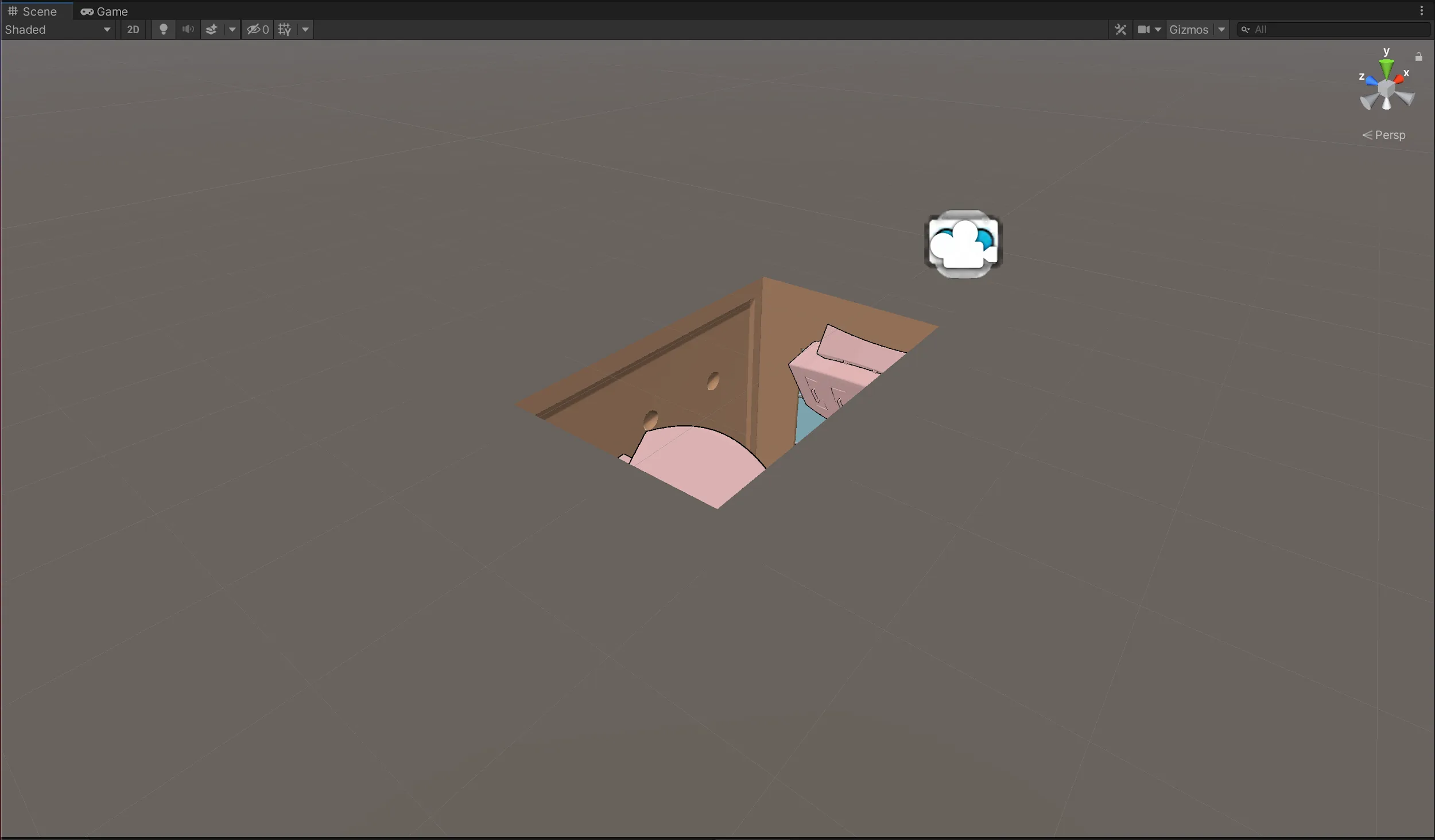

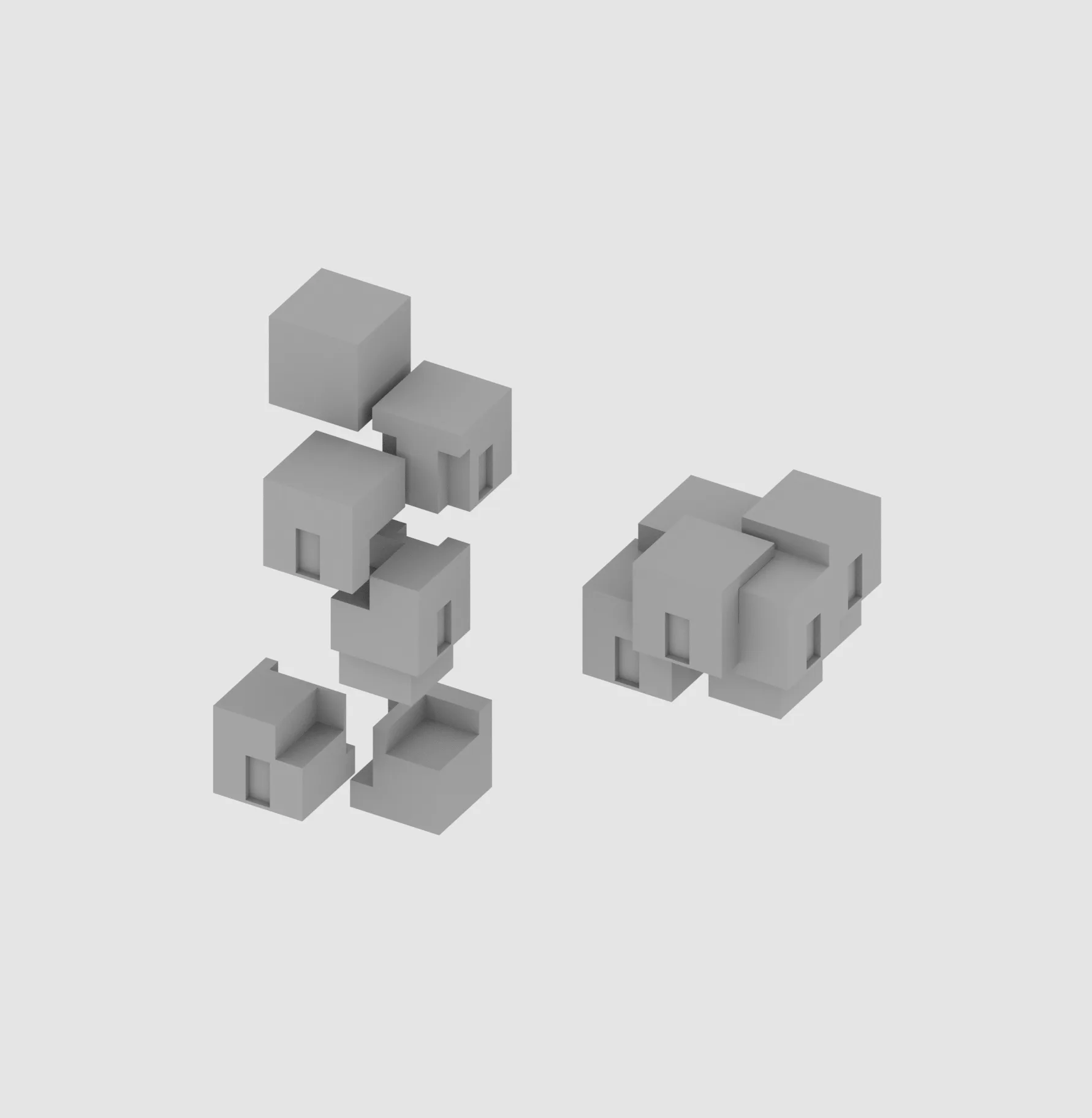

Form studies

Exploring how geometry affects occlusion and tracking stability.

Geometry and modularity

Material tests

Comparing surface finishes to understand their impact on tracking reliability.

Surface and tracking reliability

Assembly

Integrating physical components, image targets, and electronics into working tools.

Building the tools

In use

Testing how the system behaves across different environments and lighting conditions.

AR captures and tests

What I learned from building the system

Tracking performance changed significantly depending on surface finish and contrast.

Occlusion broke when geometry was slightly misaligned, and lighting affected reliability across sessions.

Separating AR behaviors into individual tools made each mechanic easier to understand.

A reusable way to evaluate AR mechanics during design

The system provides a consistent way to test how AR behaves across different conditions. It allows earlier evaluation of constraints and more reliable design decisions.

This project shaped how I approach new systems. I start by testing constraints directly, then design from what holds up in practice.
